Recently I came across a brief statement on Facebook about the well-explained evils of stack ranking, with a half-hearted Devil’s Advocate argument about how impact can absolutely be an objective metric, measured and ranked[1]. I still think this is completely wrong; this post is my attempt to expand on why.

To start, workers are multi-dimensional and multi-faceted in skill; their varied abilities are what enable companies to build and sustain their businesses. In fact, this belief is one of the cornerstones of diversity advocacy: a diverse set of people, each with unique perspectives and strengths, makes better decisions. The tech industry’s recent fixation on diversity is one part rectifying an oversight, one part building better business.

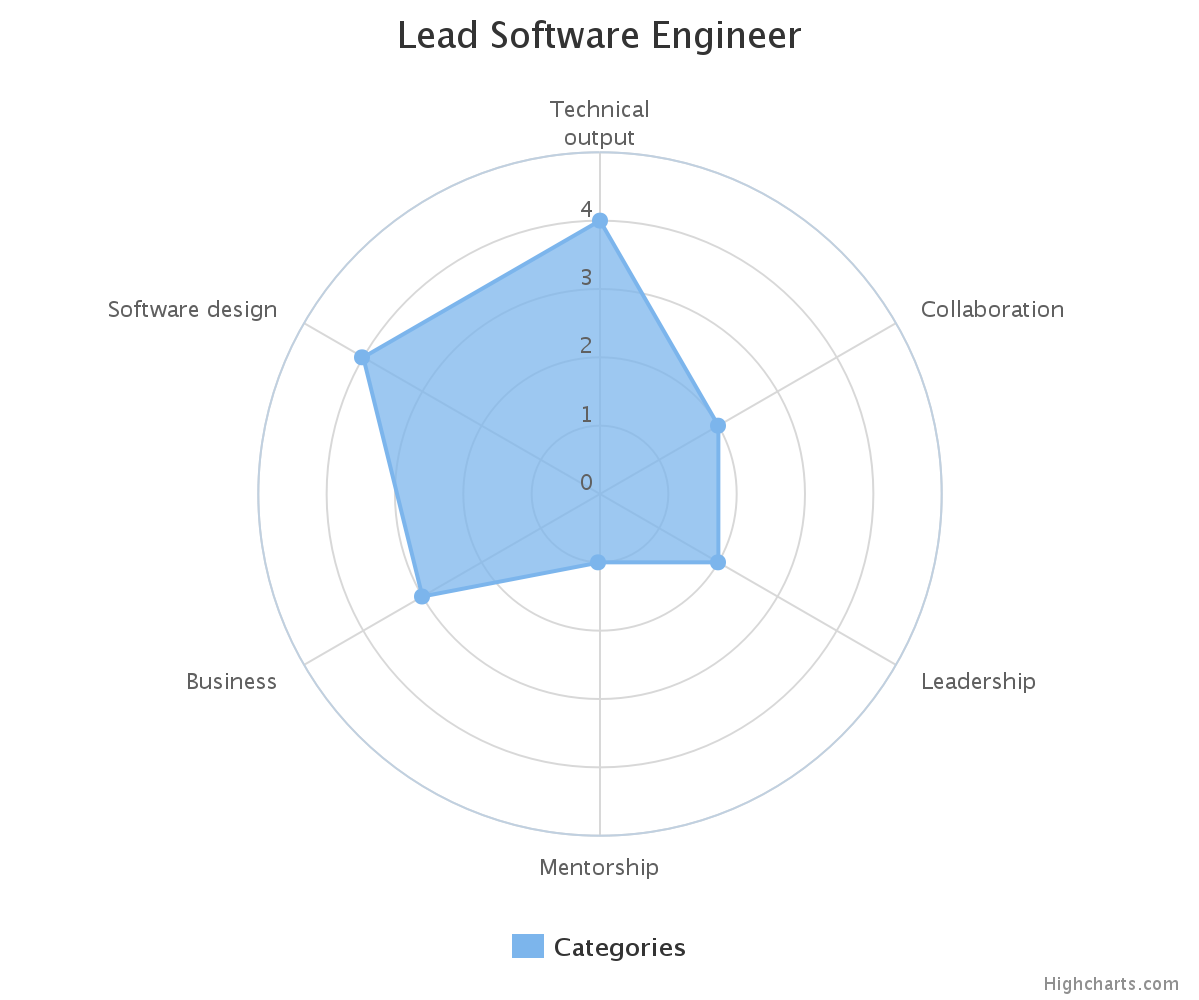

The true measurement of an employee, then, requires judging abilities on multiple axes. Whenever I think about this, I picture a polar chart of categories with scores from 1 to 5, with profiles approximated by the general geometric shape of the graph. For instance, a “lone wolf” senior software engineer may look like this:

Here, some of the axes end up being engineering specific, but higher level categories can be used so that the chart can be applied across multiple roles and disciplines.

In this model, stack ranking is a lossy compression mechanism. Specifically, it seeks to distill what is already a heuristic of someone’s skillset further into a single value, which then can be measured against others’ values. Whenever we talk ourselves into comparing persons A and B — on whether someone’s contributions here are more or less meaningful than someone else’s contributions there — what we’re really doing is adjusting weights on an implicit linear equation which sums up to that singular value.

Whether it’s based on “impact” or some other measurement, my main issue with stack ranking is that there’s no objective or even easy way to divine this equation without including a truckload of hidden bias. That graph above already has a handful of categories which reflect my own biases, and I can’t think of a decent way to turn it into a singular, comparable number without further losing what little nuance it’s trying to communicate. A ranked list of employees or teams implies some measure of objectivity, but the process requires additional, unacknowledged functions biased towards the rankers. It adds an entirely new layer of subjectivity on top of an already subjective set of judgements.
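To make that implicit equation concrete, here is a minimal sketch in Python. Everything in it is an assumption made up for illustration: the axis names, the two profiles, their scores, and both sets of weights. It collapses each profile into a single number with a weighted sum, then ranks by that number.

```python
# Hypothetical axes and 1-to-5 scores, invented purely for illustration.
AXES = ["coding", "design", "communication", "mentoring"]

PROFILES = {
    "A": [5, 4, 2, 1],  # roughly a "lone wolf" shape
    "B": [3, 3, 4, 4],  # a more collaborative shape
}


def collapse(scores, weights):
    """Compress a multi-axis profile into one number via a weighted sum."""
    return sum(s * w for s, w in zip(scores, weights))


def stack_rank(profiles, weights):
    """Order names from highest to lowest collapsed score."""
    return sorted(
        profiles,
        key=lambda name: collapse(profiles[name], weights),
        reverse=True,
    )


# Two rankers who value different things (these weights are assumptions too).
values_output = [4, 4, 1, 1]
values_teamwork = [1, 1, 4, 4]

print(stack_rank(PROFILES, values_output))    # A ranks above B
print(stack_rank(PROFILES, values_teamwork))  # B ranks above A
```

The profiles never change between the two calls; only the weights do, and the order flips. Whoever picks the weights picks the ranking.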

There is an analogous realm of discussion where ranking is a cultural norm: professional sports. In the modern era of sports, fans are obsessed with rankings, despite the fact that no one actually agrees on what criteria make up “the best”. They argue about top players, top teams, top playoff series, etc. with completely subjective points, important mostly to the fan arguing. Almost all of the discussion revolves around the fact that there’s no common basis of comparison, so there can never be actual agreement. It’s one of the reasons why sports talk always ends up going in circles.

Going back to stack-rank-based management: basing personnel decisions on that system is dangerous. Business requirements change, and the skills (and people) that a team needs shift from quarter to quarter and project to project. The people and teams best equipped to tackle a changing environment feature diverse aptitudes, and it’d be a disservice to truncate that richness of disciplines into a solitary rank.

[1] With the implication that those at the tail end are let go, or at least given a stern talking-to.